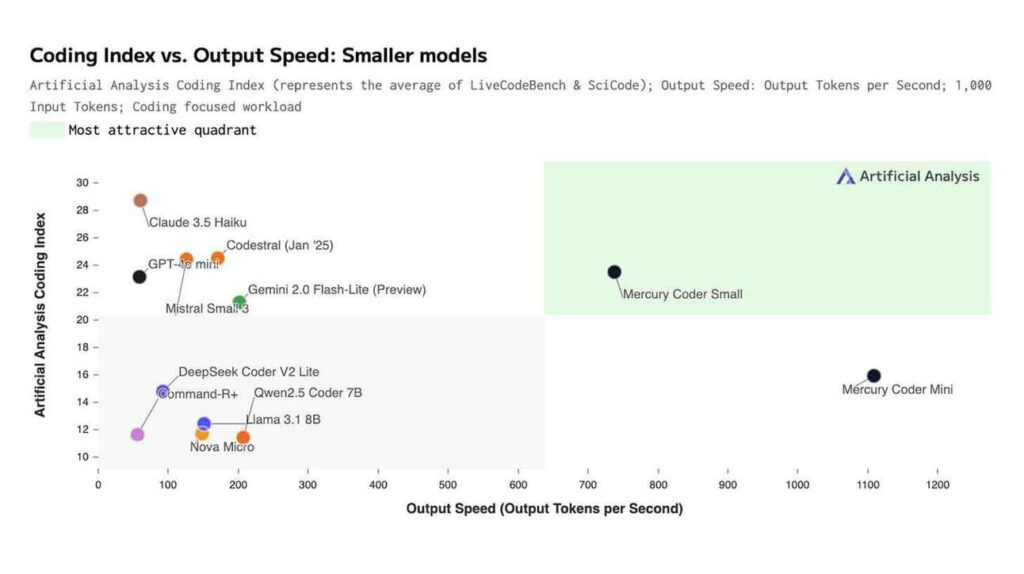

The AI race is no longer just about raw power; it’s about efficiency. A newly surfaced graph comparing several small AI models, including Claude 3.5 Haiku, GPT-4o, Mistral Small, Mercury Coder, and more, highlights a crucial tradeoff in AI: coding intelligence vs. output speed. As AI tools become integral to coding workflows, developers and businesses alike must understand the strengths and weaknesses of these compact but mighty models.

The Coding Index vs. Output Speed Tradeoff

At the heart of this discussion lies the relationship between coding intelligence (a coding index, plotted on the y-axis) and output speed (tokens per second, plotted on the x-axis). On one end, we have ultra-fast models that churn out text rapidly but may struggle with complex reasoning. On the other, we have more capable AI coders that sacrifice speed for accuracy.

- Claude 3.5 Haiku & GPT-4o: These models show a balance between intelligence and speed. GPT-4o, for instance, has proven itself a powerhouse in reasoning and context retention, making it ideal for tasks requiring deep understanding. Claude 3.5 Haiku, on the other hand, is known for its strong performance in structured problem-solving while maintaining relatively high output speeds.

- Mistral Small & Nova Micro: Positioned on the high-speed side of the spectrum, these models prioritize output speed. If you’re generating boilerplate code, handling repetitive scripting tasks, or simply need a fast AI assistant that doesn’t get bogged down in complex logic, these lightweight models shine.

- Mercury Coder (Small & Mini): These models are designed specifically for code generation, and they strike an interesting balance between intelligence and efficiency. While not the fastest on the graph, their ability to produce high-quality code with minimal hallucination makes them appealing for programmers who want accuracy without needing a high-powered AI.

Why Does This Matter?

For developers, choosing the right AI model depends on the type of work at hand. If you’re debugging intricate code structures or optimizing algorithms, a slower but smarter AI model may be the better choice. However, if you need rapid code generation, automation, or simple script-writing, a speed-optimized model can save you valuable time.
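To make that choice concrete, here is a minimal sketch of how a team might encode it: pick the fastest model that clears a minimum coding score for the task, then estimate how long a response of a given length would take. The model names, scores, and speeds below are hypothetical placeholders, not values read off the graph, and `ModelProfile`, `pick_model`, and `estimated_seconds` are illustrative names rather than part of any library.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelProfile:
    name: str
    coding_score: float       # y-axis of the graph: higher means stronger at coding
    tokens_per_second: float  # x-axis of the graph: higher means faster output


def estimated_seconds(model: ModelProfile, output_tokens: int) -> float:
    """Rough generation time: tokens to produce divided by output speed."""
    return output_tokens / model.tokens_per_second


def pick_model(models: List[ModelProfile], min_coding_score: float) -> Optional[ModelProfile]:
    """Return the fastest model whose coding score meets the threshold, or None."""
    eligible = [m for m in models if m.coding_score >= min_coding_score]
    if not eligible:
        return None
    return max(eligible, key=lambda m: m.tokens_per_second)


# Hypothetical placeholder profiles; substitute real benchmark figures.
CANDIDATES = [
    ModelProfile("fast-model", coding_score=30.0, tokens_per_second=400.0),
    ModelProfile("balanced-model", coding_score=45.0, tokens_per_second=150.0),
    ModelProfile("careful-model", coding_score=60.0, tokens_per_second=50.0),
]

# Boilerplate tolerates a low threshold; intricate debugging demands a high one.
boilerplate = pick_model(CANDIDATES, min_coding_score=25.0)
debugging = pick_model(CANDIDATES, min_coding_score=55.0)

print(boilerplate.name, estimated_seconds(boilerplate, 2000))  # fast-model 5.0
print(debugging.name, estimated_seconds(debugging, 2000))      # careful-model 40.0
```

With these placeholder numbers, the same 2,000-token response takes about 5 seconds on the fast model and about 40 seconds on the careful one, which is the kind of gap worth weighing against the difficulty of the task.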

Businesses should also take note. AI models are increasingly integrated into coding platforms, and selecting the right one can improve developer productivity and reduce overhead costs. Startups and companies looking to scale should consider the cost-benefit ratio of using a high-speed vs. high-intelligence AI model for different tasks.

The Future of AI Coding Models

As AI research progresses, the next generation of models is likely to blur the lines between speed and intelligence. Advancements in architecture and fine-tuning may make it possible to build compact models that are both fast and smart, reducing the need for developers to make trade-offs.

For now, understanding the nuances of these models allows users to make informed choices, optimizing their workflow and staying ahead in the rapidly evolving landscape of AI-assisted coding.